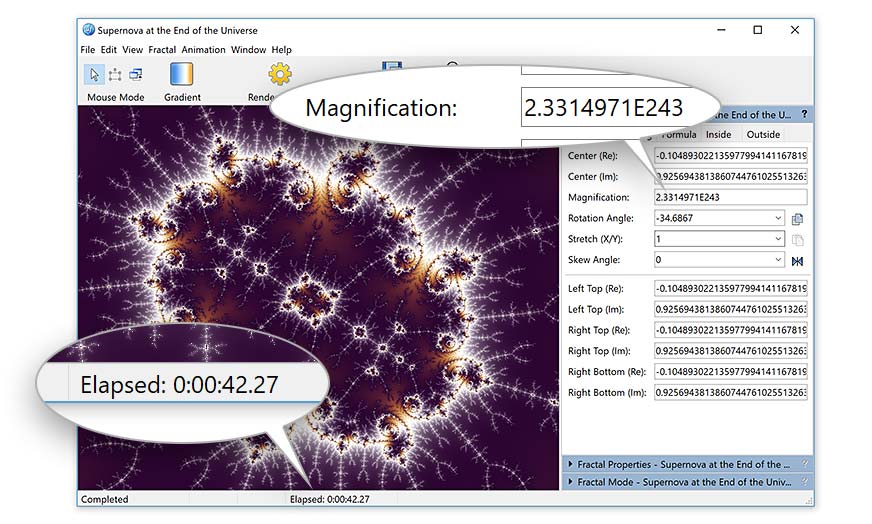

Stable Diffusion is mind-blowingly good at some things. If you are looking for modern artistic illustrations (like the stuff that you would find on the front page of Artstation) - it's state of the art, better in my opinion than Dalle-2 and Midjourney.

But the interesting thing is that while it is so good at producing detailed artworks and matching the styles of popular artists, it's surprisingly weak at other things, like interpreting complex original prompts. We've all seen the meme pictures made in Craiyon (previously Dalle-mini) of photoshop-collage-like visual jokes. Stable Diffusion with all its sophistication is much worse at those and struggles to interpret a lot of prompts that the free and public Craiyon is great with. The compositions are worse, and it misses a lot of requested objects or even misses the idea entirely.

Also, as good as it is at complex artistic illustrations, it is just as bad at minimalistic and simple ones, like logos and icons. I am a logo designer and I am already using AI a lot to produce sketches and ideas for commercial logos, and right now the free and publicly available Craiyon is head and shoulders better at that than Stable Diffusion. Maybe in the future we will have a universal winner AI that is the best at any style of picture you can imagine. But right now we have an interesting competition where different AIs have surprising strengths and weaknesses, and there's a lot of reason to try them all.

Are they giving out trials without an invite now? I was invited by someone who was paying, became addicted by the end of my trial, subscribed for a month, then gave out invites to friends, some of whom ended up also paying - not a bad business model! It was bad timing though: the day after I joined, I discovered Disco Diffusion and haven't stopped rendering since (roughly 10k images rendered, mostly for animations). It takes longer, and the results are often less realistic compared to Midjourney, Dall-E (1/2) or Stable Diffusion (which I've been toying with for a few weeks), but it's somehow much more satisfying having to wait xx minutes for a render to complete, running on your own local PC, not having to use bots with 1000 other people in a channel spamming their prompts, and having TONS of parameters to play around with. I have a Google Drive full of docs from my own studies, comparing parameter values, models, prompts, etc. I'm really looking forward to Stable Diffusion releasing their models; I know VoC will add those models as soon as they are available. On top of that, VoC has been adding support for diffusion models (of which I'm training my own), and new ones are added constantly as more and more people build models for e.g. pixel art, medieval style, monochromatic, etc.

Also, the results vary drastically if you have enough VRAM to load more models. I've been saving money and waiting impatiently for 4090s to drop. Check out the Disco Diffusion or VoC Discord - people post their works in there and often you will see results that make you wonder if they're cheating :) I'd start with VITL14 and add one or more RN types. I personally like to use multiple VIT and RN models just to fill up VRAM. What exactly will fit depends on your output resolution and requires a lot of trial and error. I always have Task Manager open to monitor VRAM usage. In general, VIT = more realistic, RN = more artistic. It can take a lot of experimentation to find what exactly tickles your fancy. I constantly change which models I'm using depending on what I'm going for. This redditor did a nice comparison, and there are many more "studies" for which models to use - you can google around, there are new articles/studies being posted daily. You can also try disabling use_checkpoints if you have extra VRAM, since it will render a bit faster (but uses more VRAM since it doesn't save intermediary 'checkpoints' to disk).
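
For the trial and error mentioned earlier (loading several VIT and RN models and watching VRAM in Task Manager), a small script can log the same numbers between renders. This is only a rough sketch, not something from the original comments: it assumes an NVIDIA card with the `nvidia-smi` tool on your PATH, and the helper names (`parse_vram_csv`, `query_vram`, `headroom_mib`) are made up for illustration.

```python
import subprocess


def parse_vram_csv(text):
    """Parse 'used, total' lines (in MiB) as printed by nvidia-smi
    with --format=csv,noheader,nounits. Returns one tuple per GPU."""
    readings = []
    for line in text.strip().splitlines():
        used, total = (int(part.strip()) for part in line.split(","))
        readings.append((used, total))
    return readings


def query_vram():
    """Ask nvidia-smi for used/total memory of every visible GPU."""
    result = subprocess.run(
        [
            "nvidia-smi",
            "--query-gpu=memory.used,memory.total",
            "--format=csv,noheader,nounits",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_vram_csv(result.stdout)


def headroom_mib(used, total):
    """Free VRAM in MiB: a rough guide to whether one more model might fit."""
    return total - used


if __name__ == "__main__":
    try:
        for index, (used, total) in enumerate(query_vram()):
            free = headroom_mib(used, total)
            print(f"GPU {index}: {used} / {total} MiB used, {free} MiB free")
    except (FileNotFoundError, subprocess.CalledProcessError, ValueError) as err:
        # No NVIDIA driver/tool available, or the output was not plain numbers.
        print(f"Could not read VRAM usage: {err}")
```

Run it once before adding a model and once after a render starts; the difference gives a rough idea of what each extra model costs at your output resolution. It does not predict whether a render will fit, so the trial and error still applies.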
